Mirrors All the Way Down

Why LLMs and Humans Cannot Both Be Stochastic Parrots

One often hears adherents of some variety of the computational theory of mind argue, “Why is the reductionism about LLMs only one-sided? Perhaps statistical manipulation of large amounts of textual data is all that humans do too.” This riposte is off the mark, however. If all there is on both sides is mere mechanical manipulation of inert “data” on the basis of proximity to other “data”, then the very claim winds up refuting itself: at some point, for any of the products of such mechanical manipulation to actually have any inherent meaning as identical to some reality, there must have been a true act of intentionality towards the world, constituting it as it is and interpreting it truly and rationally according to supra-material concepts and categories. Indeed, the denial that this is possible at all is inherently self-vitiating, always depending on the very thing it denies. To question whether humans can intentionally grasp reality must always already presuppose that they can, for if it were the case that they could not, then one would have understood some meaning about reality as it is, namely that humans cannot understand reality, which is nothing but a performative contradiction.

Humans, then, must be intrinsically capable of constituting some one thing about reality as it is (such that the world does conform to consciousness). LLMs need not be, and the fact that they are not gives us no reason to turn the question back on ourselves. For instance, if “horse” were to show up on your screen in a “conversation” with an AI chatbot, then for that symbol to actually possess any intelligible meaning, rather than being a sign pointing to nowhere, a string of characters designating no thing at all instead of referencing some specific quadrupedal mammal defined by its properties and relations and genus and species, there must at some point have occurred some rational grasping of that concept: an achievement of identity between internal expression and external reality, along with the latter’s constitutive concepts and categories as one instantiation of them.

It follows that merely situating that set of characters more closely to another set, and these in relative proximity to other strings of characters, and so on at a gargantuan scale, as LLMs do, would not be sufficient to constitute “knowledge,” however much it produces the semblance of semantic cognizance and conscious apprehension of reality, for here we have defined the capacity to know as the achievement of conformity between meaning and subjective intention.[1] If the world is at all, in any manner, reflected in the output of LLMs, then at some juncture there must exist a faculty of intussusceptive awareness with regard to reality; if not in LLMs, then necessarily in us who have created them. It simply does not follow, and logically cannot be, that if an LLM is a pure exteriority mechanically producing a mimicry of conscious apprehension, then so must be the human being. This posit seems buoyed less by coherent reasoning than by a fashionable anthropological pessimism, a self-conscious attempt at what passes for cosmic modesty in the twenty-first century. If the human being too were a pure exteriority, then just as LLMs do not intussusceptively grasp reality, neither would humans; and in that case reality would be unknowable. But if reality is said to be unknowable, then something fundamental about reality has been apprehended; it follows that reality is knowable, and as knowability exists relative to knowledge, there must be such a thing as subjective mind, as intrinsic intentionality towards meaning, even in principle, whether in actuality or in potency.

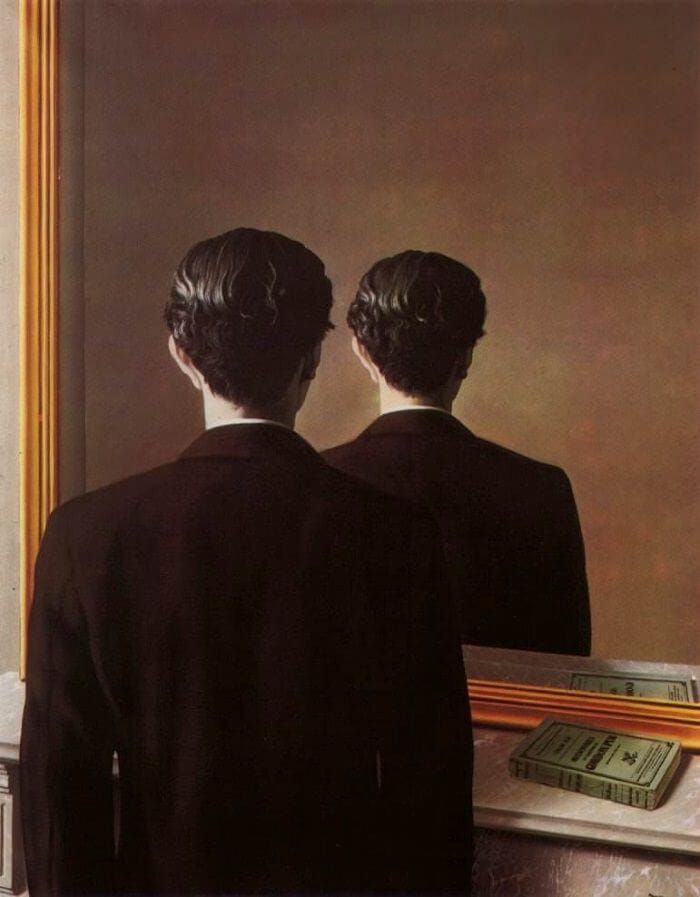

That intentional grasp of meaning, necessary for any truth or actual reality at all to be truthfully designated, is possible only if we who have made LLMs do understand reality, as beings capable of such an act of intrinsic intentionality towards the world’s intelligibility, and are mirrored on the other side in what looks like a simulation of consciousness yet remains a mechanical production of the outcomes of real consciousness, of real intentional understanding of reality. It is not possible if on both sides, all the way down, there is nothing other than algorithmic processes which display at most the illusion of conscious apprehension of what exists without ever actually being so. That would be like two mirrors facing each other with no light there to be reflected at all.

Something of the kind, I submit, beleaguers all computational accounts of mind, as for instance that of Daniel Dennett, who holds that consciousness is an “illusion” in the sense that there is no unified subject actually experiencing the world but a series of connected operations in the brain (judgement, comparison, adjudication, and decision making) “filing reports,” so to speak, that create a veil of unified selfhood. Now, I believe classical metaphysical arguments are sufficient to dispel this as logically naïve.[2] Any multiplicity of essential properties, functions, or realities must be embraced within a more original metaphysical character that is immanent to them all as constituting them, excluding none, all while transcending each and the totality. Yet what strikes me about this argument is what Dennett is implicitly affirming. Bracketing the issue of conscious unity, the manner in which classical metaphysics (and certain strains of Indian philosophy) speaks about to-be-ness (be-ing, être, esse, Sein) as inherently given and received shows us that even in that respect alone Dennett already transgresses the limits of reductionistic materialism, because he imputes a depth, or depths, of subjective interiority that “takes in” what is there. Beginning only with objective exteriority, he still cannot account for subjective interiority (the reception of being) constituted of inherent intentionality towards being; he merely relegates it to the constituent parts of a physical organ. And, for the reasons mentioned above, any “emergent” intentionality towards meaning would always have to be preceded by an intrinsic intention in order to be logically or ontologically possible at all.
(Any such “constituent functions” in a computational account of mind, therefore, explain nothing, really, because they lead only to an infinite regress of component parts responsible for ever more minute and disintegrated “tasks,” such that one never actually arrives at an integrally one place where a judgement about “external” reality is made; whatever can unite these disparate parts cannot just be a whole composed of them [as the brain is] but must be a simplicity transcending the compositeness and accounting for its unity. Thus, that the brain cannot account for consciousness is not a conclusion from gaps in neurological research but a logical one from mereology.)

Yet, in all this, the principle of subjective interiority remains instantiated nonetheless, and the computationalist has not accounted, logically, for how that may be derived from externality alone.

As I have implied, computational accounts of mind tend to subtly impute subjective interiority to computers/AI through metaphors of interior depth without actually accounting for the initial logical possibility of that interiority (which is distinct from any putative evolutionary reconstruction). And in turn, the computationalist believes that by partitioning the products of consciousness, he has reduced it to nothing but a series of mechanical operations, even though all of these presuppose an intussusceptive awareness which has not been justified by what remains a reductively physicalist model of matter subject only to efficient forces, devoid of intrinsic intentionality towards intelligible formal or final causes.

If any putative set of “operations,” then, grasps reality and renders judgements about it, then conscious interiority is necessarily instantiated in principle, for reality is nothing other than what is subject to some apprehending judgement, possessing some expressible predicate, and this always already requires a principle of subjective depth that reaches “out of itself” in its innate intentionality towards being in order to grasp it and adjudicate about it.

[1] Cf. Aristotle, On the Soul III.4, 429a10-430a10.

[2] Plato, Parmenides 158a-b, 159d; Aristotle, Categories 1a20-25; Plotinus, VI.9.9.1; Proclus, Elements of Theology A, prop. 1; cf. W. Norris Clarke, The One and the Many (University of Notre Dame Press, 2001), pp. 61-2.